I’ve always found calculus a little trippy. How can an “infinitesimally small change” be a real thing with such bizarrely precise implications? I’ve used calculus in my work as an economist, but I’m not a big math nerd and I don’t typically revisit the proofs underlying the formulas. So one night about six months ago, on a whim, I sat down and asked ChatGPT to remind me why d(x^2)/dx = 2x. Within a few minutes, I was hooked.

Here I was at home on a random evening, and I had free, on-demand office hours with a math tutor that understood and answered my questions, didn’t judge me for my ignorance, gave unlimited clarifications and examples, ran with any oddball tangents I requested, and never got distracted by its cell phone or any other personal or professional obligations. Sometimes I hear about VIPs like Bono or Obama getting interested in things and calling up world-class experts for on-demand personal tutoring. From now on, that could (almost) be me, you, and anyone else who likes asking questions.

So I love AI and I use it all the time. And most of the big concerns about AI do not (yet) really freak me out. I’m not that worried AI will destroy the world, cause mass unemployment, or plunge us into disinformation oblivion.

However, I am worried about one big AI question, and that is how it will impact inequality.

The invisible trust fund

I’m worried about AI’s impact on inequality because historically high inequality is already causing a lot of problems. The relative explosion of incomes among the top 1% (really the top 0.1% and 0.001%) and the shift in national income shares away from workers and toward business owners are important. But what might be even more important — because it’s so much more palpable in normal people’s lives — is the contrast between healthy income growth for college graduates and tepid or even negative real income growth for less-educated workers.

Today richer kids wind up getting a kind of invisible trust fund. It’s not the usual pile of stocks or family businesses or homes that we associate with the term “trust fund” and that only a small share of kids ever get. Rather, it’s a far more common trust fund that takes the form of college readiness, and it’s now worth close to a million dollars in lifetime income, doled out helpfully in the form of higher earnings every year of one’s life along with greater health, job satisfaction, and family stability.

To understand why AI raises some concerns about this kind of inequality, it helps to step back and ask why college degrees have become so insanely valuable.

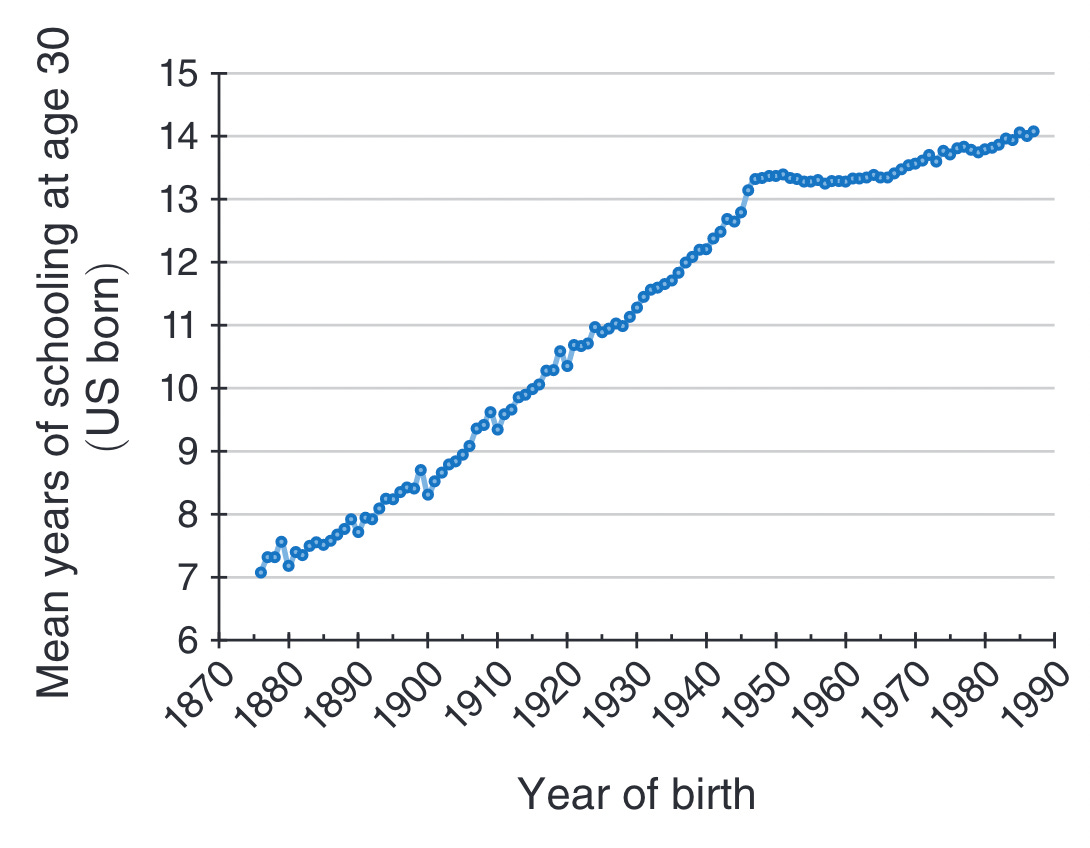

The first reason is about supply: we produce too few college graduates because the skill development opportunities required for kids to achieve college readiness and persistence place too large a burden on parents. This has made it hard for families to respond to rising college earnings premiums as swiftly as they responded to rising high-school earnings premiums in the early-to-mid-20th century. We made high school free and semi-automatic – and most families participated. We’ve made college expensive and anti-automatic – and poor families have struggled to play the game.

The second reason college degrees are so valuable is demand. Information technology in the form of computers, software, robots, etc. has automated away a lot of jobs that previously furnished good livelihoods for high school graduates, while turbocharging the productivity and earnings of highly educated workers.

As a result of rising demand and dysfunctional supply in the market for college-level skills, our inequality situation has gotten a little Hunger-Gamesy. Declining fortunes of less-educated workers have contributed to a growing sense that the whole social-economic-political game is rigged in favor of the rich, and to widespread doubts about core American principles such as “democracy” and “meritocracy” that I personally had always taken for granted as sacrosanct (as principles, obviously, not as actual practices) until recently.

So what the heck is AI? Is it just more and better information technology pouring gasoline on the inequality bonfire? Or is it actually quite different from prior information technology in ways that may decrease inequality?

A race car for the mind

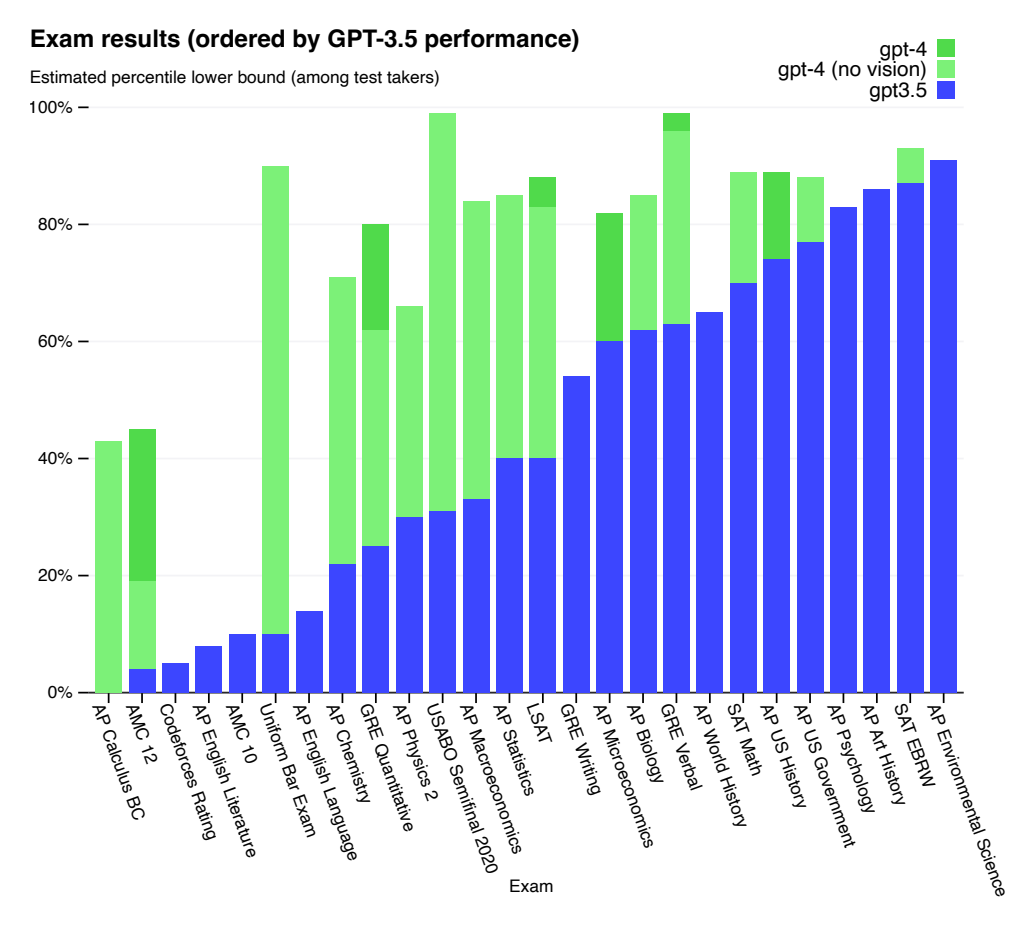

There is a chance AI could reduce the college earnings premium. In some respects, AI can already write, edit, brainstorm, analyze, argue, search, and code better than a large share of college-educated humans, and it’s improving over time. If employers can just ask AI to do all these tasks, the college earnings premium could fall back toward the more modest levels that prevailed in the decades after World War II.

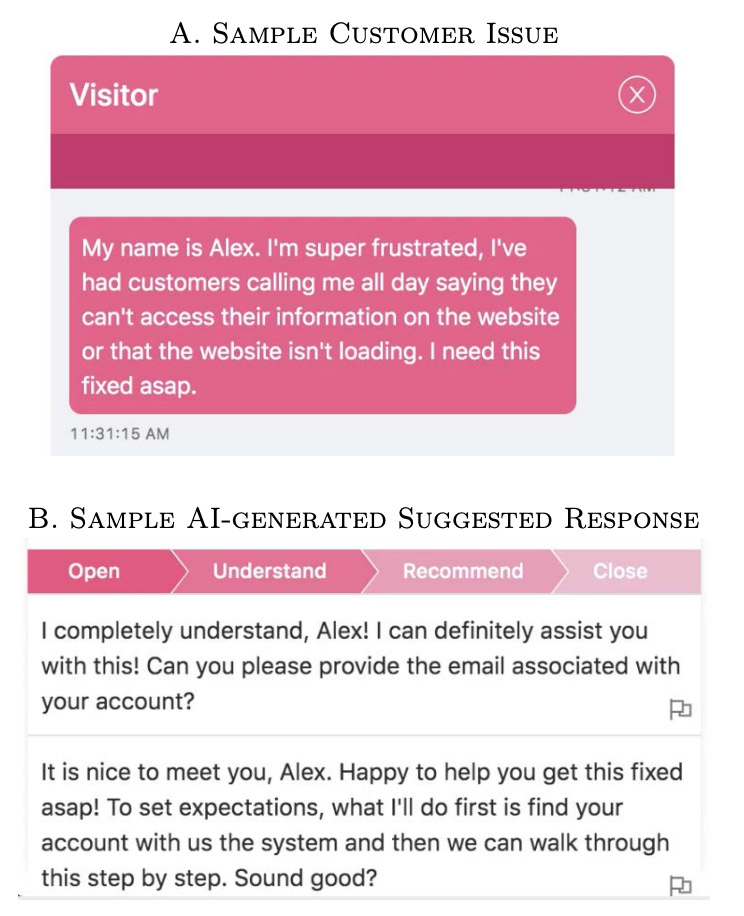

Some early research supports this line of reasoning. In one nice study by economists Erik Brynjolfsson, Danielle Li, and Lindsey Raymond, AI assistants narrowed performance gaps among customer support agents by leveling up the lower performers. Other studies have found similar equalizing impacts of AI. But it’s far too early to know how things will play out over time. We might have found similar equalizing effects of, say, digital spreadsheets on the performance of accountants and stockbrokers in the 1980s, which would have been very misleading about the ensuing inequality explosion.

In fact, I’ll bet that over the next 30 years AI will favor higher-skilled workers and thereby tend to make inequality worse. Any time someone says “I’ll bet,” you should ask: how much? My answer is not that much, because I’m not delusionally confident enough to pretend I can foresee the future of AI. But here’s what I’m thinking.

Yes, AI can write papers, it can code, it can analyze data. But that just makes people with good ideas for papers, software, and data projects – and people with the ability to evaluate and build on AI’s imperfect work – that much more productive. People with these skills are scarce. Steve Jobs described the computer as a “bicycle for the mind.” To me AI feels like a race car for the mind – not a replacement for the mind.

If AI does weaken job prospects for college graduates, I predict it will do so only for those with the weakest skills. That could shift attention away from college graduates overall, and toward an even more rarefied group of elite college graduates, or college graduates with elite majors. These people will in turn come from even more advantaged families – not so much because of relatively minor institutional biases in elite college admissions – but because elite-college and elite-major readiness require yet more advanced opportunity orchestration by parents. Maybe this group will be small enough to escape the “game is rigged” backlash that has accompanied the broader college/high-school divide. Maybe not.

At any rate, my level of certainty here is not important – there’s clearly a good chance that AI will exacerbate inequality. We can’t afford more inequality. And we shouldn’t take bets that we can’t afford to lose.

So what should we do?

The race between AI and HI

One thing we shouldn’t do is put the brakes on AI. We need AI. AI has enormous value for science, space travel, health care, education, logistics, and pretty much everything else we care about – especially military defense. Losing international dominance in AI would pose huge risks. Even if we think AI may be extravagantly dangerous, it seems even more dangerous to let authoritarian countries pull ahead in this area.

If we can’t slow down AI, what’s our best option? The key is to realize, in the spirit of economists Jan Tinbergen, Claudia Goldin, and Larry Katz, that AI’s inequality risk is not only about AI. It is about the balance between artificial intelligence and human intelligence – the race between AI and HI.

The problem with computers has not been that they made HI more valuable. The problem has been how hard we’ve made it for families to respond to these incentives and increase the supply of HI. If AI makes HI even more valuable, then the simple answer is to take some of this burden off families by stepping up our public investments in HI.

Going big on HI

What we need is an investment in HI on the scale of the New Deal or the War on Poverty. This is exactly the kind of investment I’ve sketched out previously under the label Familycare, a nod to Medicare.

Familycare would make skill development for poor kids look a lot more like skill development for rich kids. With Familycare, poor babies, like rich babies, would be cared for by parents with paid time off to cuddle, chit-chat, and read Goodnight Moon. Poor toddlers, like rich toddlers, would spend the workday in child care settings with small classes and good teachers skilled at edging out tantrums and conflict with words and deep breaths. Poor kids, like rich kids, would go to guitar lessons on Tuesdays and basketball practice on Thursdays; they would backpack in the woods and go to astronomy camps over summer break; they would get extra help from tutors when they fell behind at school; they would get a red carpet rolled out for their transition to college or other vocational springboards.

A Familycare-style investment in HI would cost a lot of money: hundreds of billions of dollars, or about half as much as Medicare. But unlike Medicare, it would raise the affected kids’ future incomes, and the tax revenue they generate, by so much that it could wind up paying for itself over time from taxpayers’ perspective. This means it wouldn’t necessarily be irresponsible to pay for Familycare with debt rather than higher taxes or lower spending, as long as global credit markets recognized the strategy and didn’t jack up interest rates on US public debt. That would be bad.

Familycare would also increase research on child development to a level commensurate with other major industries – about $100 billion annually. Speaking of the race between AI and HI, $100 billion is about how much American companies are projected to invest in AI research by 2025.

An HI investment on the scale of Familycare is our best defense against the inequality threats posed by AI. And how poetic, in a way, to safeguard ourselves against artificial intelligence by leveling up our own gloriously/pathetically human intelligence.

So what happens if I’m wrong? What happens if it does turn out that AI makes advanced HI relatively less valuable, attenuating rather than exacerbating inequality? Will the Familycare-fueled tsunami of high-skilled, college-educated young people feel betrayed as their talents get replaced by magical software, and their careers turn out only slightly better than those of people who skipped college? Could Familycare turn into a boondoggle?

Maybe!

But having an over-skilled, egalitarian population is a problem we can afford to have. It is not a Hunger Games inequality vortex where populist outrage overwhelms democracy and rule of law. It is, rather, a society in which rich and poor kids are far more equally ready to adapt to whatever AI (and quantum computing, and nuclear fusion…) throws their way, and far more equally represented in positions of authority to remake the institutional contours of a tech-disrupted world. As a parent, I can imagine my own kids inhabiting this society with curiosity bordering on excitement, rather than fear or anger.

Anyway, this post has been a little dark and veers away from my stated goal of making thinking about child development feel more like thinking about golden retrievers. So I’m happy to preview that my next post will be a holiday rumination on the wonders of children’s books. Prepare for bunnies, bulldogs, and firetrucks.